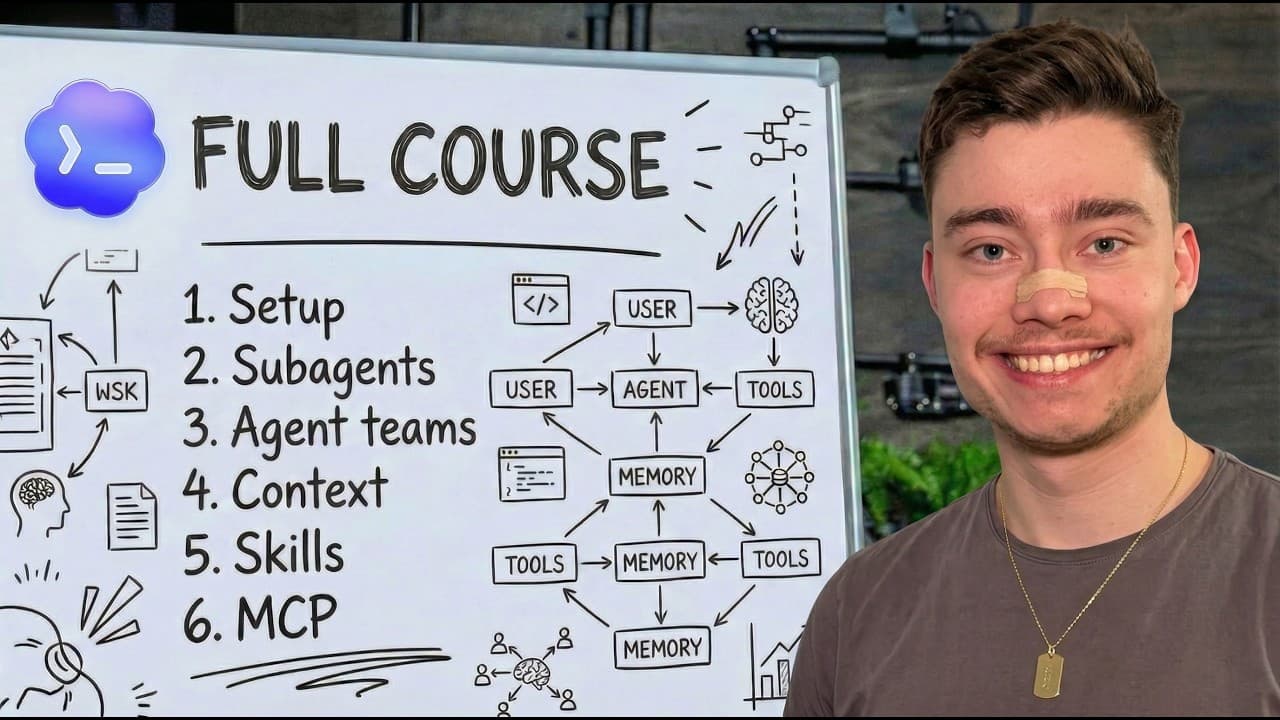

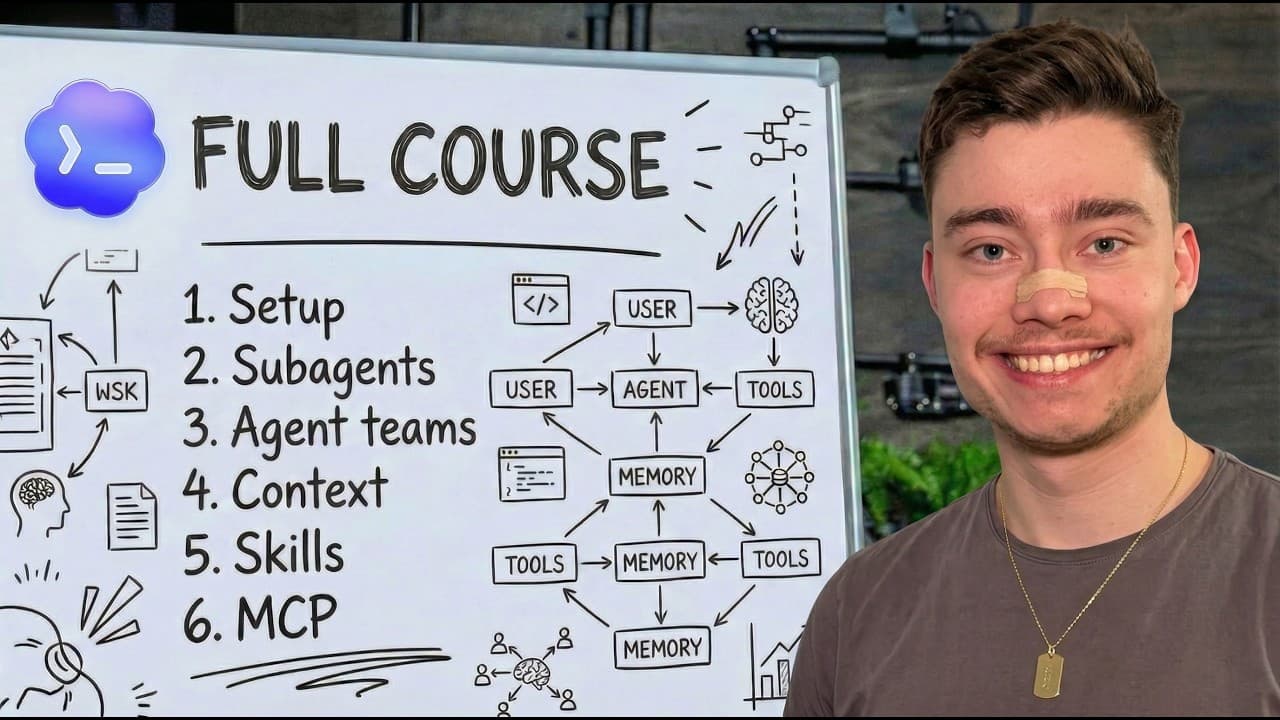

OpenAI's Codex CLI: Build and Deploy Apps in Plain English

David Ondrej's comprehensive Codex guide shows how to build production apps using AI coding assistants—no traditional coding experience required.

Written by AI. Yuki Okonkwo

April 13, 2026

Photo: David Ondrej / YouTube

David Ondrej has a provocative claim: you can build and deploy production-ready software in under an hour without knowing how to code. His evidence? He used OpenAI's Codex to build Vectal, an AI startup that sold for seven figures last year.

In an 84-minute tutorial posted yesterday, Ondrej walks through Codex CLI—OpenAI's answer to Claude Code—from installation to deployment. The pitch is ambitious but specific: by the end, you'll have "the skills to turn any idea into a real software application that's fully deployed on the internet."

I've been tracking AI coding tools since they emerged, and Codex represents something interesting in this space. Not because it's radically different from competitors, but because of how OpenAI is positioning it: as a complete environment for non-developers to ship real products.

The Setup (Genuinely Simple)

Ondrej's installation walkthrough is refreshingly honest about the friction points. Codex CLI installs via a one-line terminal command, but you'll need Node.js first. He walks through the ls and cd commands for navigating directories—basic terminal literacy that shouldn't intimidate anyone but often does.

The authentication piece matters more than it seems. Codex requires a ChatGPT subscription ($20/month for Plus, now $100-200/month for Pro). OpenAI recently restructured Pro into two tiers, matching Anthropic's Claude pricing. As Ondrej notes: "This is the best time in history to begin using Codex because the pro plan now starts at $100 a month."

Whether that's actually the "best time" depends on your usage patterns, but the pricing shift does lower the entry price for Pro from the original $200 a month to $100.

The Model Selection Question

One section stood out for its practical specificity: choosing your AI model and "reasoning effort" settings. Codex offers four reasoning levels—low, medium, high, and extra high—that control how long the model thinks before responding.

Ondrej's recommendations:

- Never use low ("too little reasoning and it will underperform")

- Medium works for most tasks

- High is his default: "If it needs to, it can run for two, three, four minutes if the task requires it. But if it's a simple change, it'll just do it in five seconds."

- Extra high only for "the most complex errors" or "massive refactors"

This granularity is interesting. You're essentially trading compute time for code quality on a per-task basis. But it also introduces cognitive overhead—you're now making meta-decisions about how hard the AI should think before it thinks.
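
Ondrej's recommendations amount to a small decision rule, which can be sketched in a few lines of Python. This is only an illustration of the guidance quoted above: the task labels, the `prefer_speed` flag, and the function name are assumptions made up for the sketch, and none of it is Codex's own configuration format.

```python
from enum import Enum


class Effort(Enum):
    """The four reasoning levels named in the tutorial."""
    LOW = "low"
    MEDIUM = "medium"
    HIGH = "high"
    EXTRA_HIGH = "extra high"


# Hypothetical task labels, invented for this sketch; they are not Codex settings.
HARD_TASKS = {"complex error", "massive refactor"}


def pick_effort(task_kind: str, prefer_speed: bool = False) -> Effort:
    """Choose a reasoning level following the guidance quoted above."""
    if task_kind in HARD_TASKS:
        # Extra high is reserved for the hardest errors and large refactors.
        return Effort.EXTRA_HIGH
    if prefer_speed:
        # Medium is described as good enough for most tasks.
        return Effort.MEDIUM
    # High is the default; LOW is never returned, per "never use low".
    return Effort.HIGH


if __name__ == "__main__":
    for kind in ("rename a button", "massive refactor"):
        print(kind, "->", pick_effort(kind).value)
```

Written this way, the "meta-decision" is visible: someone still has to label the task before the model starts thinking about it.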

The Agents.md Convention

The most technically interesting part of Ondrej's setup is the agents.md file—a system prompt that configures how Codex behaves throughout a project. He describes it as "like a readme file for agents" and notes it's used by "over 60,000 different open source projects."

This is real: agents.md has become a convention across AI coding tools (though Anthropic's Claude uses claude.md instead, because of course they do). The file lets you specify project context, coding preferences, target audience, and architectural decisions in one place.

Ondrej provides a free preset on GitHub that includes sections for project name, target user, skill level, and best practices. It's basically a persistent context layer that survives across sessions.
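
To make the idea concrete, here is a minimal sketch that renders a file with the four sections mentioned above (project name, target user, skill level, best practices). The heading names, the class, and the sample values are assumptions for illustration; this is not Ondrej's preset, and the convention itself does not require any particular headings.

```python
from dataclasses import dataclass, field


@dataclass
class AgentsFile:
    """Hypothetical container for the four sections the preset is said to include."""
    project_name: str
    target_user: str
    skill_level: str
    best_practices: list[str] = field(default_factory=list)

    def render(self) -> str:
        """Return the file contents as markdown text (headings are assumed)."""
        parts = [
            f"# {self.project_name}",
            "",
            "## Target user",
            self.target_user,
            "",
            "## Skill level",
            self.skill_level,
            "",
            "## Best practices",
        ]
        parts += [f"- {rule}" for rule in self.best_practices]
        return "\n".join(parts) + "\n"


if __name__ == "__main__":
    # Sample values are placeholders, not taken from the tutorial's preset.
    doc = AgentsFile(
        project_name="Demo App",
        target_user="Non-technical founders",
        skill_level="Beginner; explain changes in plain English",
        best_practices=["Keep functions small", "Ask before adding dependencies"],
    )
    print(doc.render())
```

Saving the rendered text at the project root is what turns it into the persistent context layer described above, since the agent can reread it at the start of every session.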

The sophistication here is worth noting. You're not just chatting with an AI—you're configuring a development environment with preferences, constraints, and domain knowledge. That's closer to actual software engineering than it appears.

What He Actually Built

Ondrej demonstrates two production apps his team uses:

- YouTube Alpha: A thumbnail generator using Google's Imagen 2 and Imagen Pro models. It generates multiple variations simultaneously (something he notes Google's own Studio struggles with) and includes shareable canvases for team collaboration.

- Funnel Analytics Dashboard: Integrated with Typeform and Calendly to track conversion metrics. "Built mostly with Codex like probably 90% Codex, 10% Claude Code," according to Ondrej.

Both were built in "one two three hours" and deployed to production. Whether you find that timeline credible probably depends on your definition of "production-ready," but the apps are real and functional.

The Tutorial Project: Facial Transformation App

For the walkthrough, Ondrej builds an app that shows users how they'd look with cosmetic procedures—smaller nose, lip fillers, facelifts, etc. The target audience: women 18-55.

His rationale is unnervingly blunt: "Women do spend a lot more money and you can build the dumbest [stuff] imaginable and it will work." He references a manifestation app that hit $300K MRR, arguing that male developers miss opportunities by only building tools they'd personally use.

The ethics of a "see yourself with cosmetic surgery" app are... complex. Ondrej acknowledges this briefly ("whether this type of stuff should be promoted or not, that's an entirely different conversation") before moving on. Fair enough—this is a technical tutorial, not a philosophy seminar. But the example does highlight something real: AI coding tools dramatically lower the barrier to building applications with genuine social impact, positive or negative.

The Codex vs. Claude Code Question

Ondrej makes strong claims about Codex's superiority: "Without a doubt more powerful at building complex applications, solving deep errors and running for longer without making mistakes than Claude Code."

I'm cautious about these absolute comparisons. Model performance varies by task, changes with updates, and depends heavily on how you prompt. But Codex does have architectural advantages—it's designed as a complete coding environment, not just an assistant. The CLI, desktop app, VS Code extension, and multi-agent system form a more integrated ecosystem than Claude's offering.

That integration comes at a cost, though: vendor lock-in and the complexity of learning OpenAI's specific conventions.

What's Actually New Here

Codex CLI isn't revolutionary—it's iterative improvement on the AI coding assistant category. But three things stand out:

- The subscription model: Tying Codex to ChatGPT subscriptions rather than separate API costs makes budgeting more predictable.

- Multi-agent architecture: Codex can spawn sub-agents for specific tasks, approaching software development as coordination rather than single-threaded execution.

- Convention over configuration: The agents.md standard and opinionated defaults reduce decision fatigue.

The bigger question is whether this model—AI as primary developer, human as product manager—actually produces maintainable software at scale. Ondrej's apps work, but we're seeing minimum viable products, not complex systems with years of technical debt.

The Deployment Reality Check

The tutorial continues into deployment (Vercel), version control (Git/GitHub), debugging, and advanced features like cron jobs and skill plugins. This completeness is valuable—most AI coding tutorials stop at "it works on my machine."

But here's what the tutorial doesn't cover: what happens six months later when you need to modify the app and you've forgotten how it works? Or when a dependency breaks? Or when you need to onboard another developer?

These aren't failures of Codex specifically; they're open questions for the entire AI coding category. We're optimizing for shipping fast, but shipping is only the beginning of a product's lifecycle.

Ondrej's course is thorough, practical, and makes Codex accessible to non-developers. Whether that accessibility is unambiguously good depends on what gets built with it.

Yuki Okonkwo

Watch the Original Video

CODEX FULL COURSE: From Zero to Deployed App (2026)

David Ondrej

1h 24m

About This Source

David Ondrej

David Ondrej is establishing himself as a noteworthy figure in the YouTube technology domain, offering deep dives into artificial intelligence and software development. While subscriber data is not publicly available, his channel's growing influence is evident through his prolific content output. Active for just over five months, David's channel attracts developers and tech enthusiasts eager to stay ahead with the latest in AI advancements.

