Why Custom Memory Allocators Still Matter in Modern C++

Kevin Carpenter's CppCon talk demonstrates that even with modern C++ features, custom allocators remain essential for performance-critical applications.

Written by AI. Mike Sullivan

February 13, 2026

Photo: CppCon / YouTube

Kevin Carpenter opened his CppCon talk with a challenge—nine people told him there's no way to explain custom allocators at a beginner level. He did it anyway, and the GitHub repo with his code is already up. This matters because memory management remains one of those topics where the gap between "using the standard library" and "understanding what's actually happening" can cost you performance, stability, or both.

Carpenter works on credit card processing at EPX, handling transactions for roughly 60% of US consumers. He's also worked on interest rate risk analysis—the kind of software that might have prevented the Silicon Valley Bank collapse if anyone had been paying attention. His perspective comes from production systems where memory allocation patterns directly affect whether your transaction clears in milliseconds or seconds.

The talk starts with a familiar ChatGPT response about why you don't need to worry about memory management in modern C++. The punchline: "Just don't forget the little asterisk and ampersands lurking in the shadows." That's the setup for everything that follows.

The Speed Trap Nobody Talks About

When you call new in C++, you're not just allocating memory. When the allocator has to ask the operating system for more, you're potentially triggering a switch into the kernel, which might map memory or grow the program's data segment with brk/sbrk, then a switch back, and only then do you get object construction. "New if you're thinking about it can actually be quite costly," Carpenter explains, "and that doesn't come into when we start talking about cache and things like this. This is just actually making the request for memory."

The second problem is what he calls "memory chaos": fragmentation that destroys cache locality. If your objects are scattered across memory, you're not just risking L1 cache misses. You're potentially falling through to L2, then main memory, paying more latency at each step. When one object lives in a completely different memory section from another because of fragmentation, you've got double requests and double chances for cache misses.

This is the part where most talks would pivot to "use smart pointers." Carpenter acknowledges that—he gave a talk last year called "Almost Always Vector" about depending on the standard library. His line: "20% of the effort for 80% of the output, that's what we get when we use a standard library and that's a good thing." But then comes the qualifier: "or use modern C++ until you can't."

The Standard Allocator's Origin Story

Here's something I didn't know: the standard allocator was originally created to handle "near and far RAM" back when PCs shipped with 640K of memory. If you were lucky, you had another megabyte or so sitting outside the basic system memory space. Standard allocators were the mechanism to reach that far memory in the DOS era.

Today, [std::allocator](https://en.cppreference.com/w/cpp/memory/allocator) is baked into every STL container as a template parameter most people never touch. The allocator contract is surprisingly minimal: you need a value_type, an allocate method, and a deallocate method, plus equality comparison and a converting constructor so containers can compare and rebind allocators. That's nearly it. C++11 added std::allocator_traits to supply defaults for the rest, so you don't need to implement construct, destroy, or max_size yourself.
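To make that contract concrete, here is a minimal sketch of an allocator that simply forwards to operator new and operator delete. The name MinimalAllocator and the helper sum_with_minimal_allocator are hypothetical, not taken from Carpenter's repo, and the sketch assumes C++17 and element types without extended alignment requirements.

```cpp
#include <cstddef>
#include <limits>
#include <new>
#include <vector>

// Hypothetical example type: forwards every request to operator new/delete.
template <class T>
struct MinimalAllocator {
    using value_type = T;

    MinimalAllocator() = default;

    // Converting constructor: lets a container rebind to its internal types.
    template <class U>
    MinimalAllocator(const MinimalAllocator<U>&) noexcept {}

    // Returns raw, uninitialized storage for n objects of type T.
    T* allocate(std::size_t n) {
        if (n > std::numeric_limits<std::size_t>::max() / sizeof(T)) {
            throw std::bad_array_new_length();
        }
        return static_cast<T*>(::operator new(n * sizeof(T)));
    }

    // Releases storage obtained from allocate(); no destructors run here.
    void deallocate(T* p, std::size_t) noexcept { ::operator delete(p); }
};

// Stateless allocators always compare equal: any instance can free
// memory obtained from any other instance.
template <class T, class U>
bool operator==(const MinimalAllocator<T>&, const MinimalAllocator<U>&) noexcept {
    return true;
}
template <class T, class U>
bool operator!=(const MinimalAllocator<T>&, const MinimalAllocator<U>&) noexcept {
    return false;
}

// Usage: pass the allocator as the container's second template parameter.
inline int sum_with_minimal_allocator() {
    std::vector<int, MinimalAllocator<int>> v;
    for (int i = 1; i <= 4; ++i) v.push_back(i);
    int total = 0;
    for (int x : v) total += x;
    return total;  // 1 + 2 + 3 + 4
}
```

Everything else the container needs (construct, destroy, max_size, rebinding) comes from std::allocator_traits defaults.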

The critical distinction Carpenter emphasizes: memory allocation and object construction are separate operations. "We have the allocation which is just making the memory available but then you also have that part where you're assigning the value into the memory," he notes. "That is two steps and when it comes to writing memory allocation patterns such as stack or pool, that's one of the places where we can get savings."
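The two steps can be seen in isolation with operator new and placement new. The following is a small sketch under the assumption of C++17 (for std::destroy_at). The function name reuse_one_allocation is made up for illustration, and it reuses a single raw allocation for two objects in turn.

```cpp
#include <cstddef>
#include <memory>
#include <new>
#include <string>

// Constructs two strings, one after the other, in a single raw allocation.
inline std::size_t reuse_one_allocation() {
    // Step 1: allocate raw, uninitialized bytes. No std::string exists yet.
    void* raw = ::operator new(sizeof(std::string));

    // Step 2: construct an object in that storage with placement new.
    std::string* first = new (raw) std::string("hello");
    std::size_t total = first->size();
    std::destroy_at(first);  // ends the object's lifetime, keeps the storage

    // The same storage is reused without asking for memory again.
    std::string* second = new (raw) std::string("allocators");
    total += second->size();
    std::destroy_at(second);

    // Only now is the storage itself released.
    ::operator delete(raw);
    return total;
}
```

The call to operator new happens once, while construction and destruction happen twice. Separating the expensive step from the repeated one is what stack and pool allocators build on.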

If you can allocate a large block of memory once, you can reuse that allocation repeatedly. The standard library already does a version of this (std::vector, for example, reserves capacity and constructs elements into it later), but custom allocators let you optimize for your specific access patterns.

Pool Allocators: Fast Food for Memory

Carpenter walks through building a pool allocator—his metaphor involves Phoenix having the highest per-capita swimming pools in the US at one pool per 13 people. Pool allocators pre-allocate a fixed block of memory and manage it with a linked list of free nodes.

The setup: allocate space for 56 objects of a fixed size, create a linked list pointing to each available slot, and initialize the head pointer. When you need an object, you grab the first entry from the linked list, update the pointer to the next available slot, increment your allocation count, and return. The actual memory allocation happened once at initialization. Now you're just managing pointers.

"If we're at a null pointer, then the pool is exhausted and we can throw back a bad alloc," Carpenter explains. "Otherwise, we're going to grab our first entry off the link list and update our free head to point to the next item." The objects fill sequentially, one after another, maintaining perfect cache locality.

He uses this pattern in production for credit card transactions. When you're processing thousands of transactions per second and latency matters, the predictability of a pool allocator beats general-purpose allocation every time.
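The pool steps described above can be sketched roughly as follows. This is not Carpenter's implementation: the class name FixedPool, its interface, and the choice to round each slot up to alignof(std::max_align_t) are assumptions made for illustration. The sketch is single-threaded and hands out raw slots, leaving object construction to the caller.

```cpp
#include <cstddef>
#include <new>

// Hypothetical fixed-size pool: one upfront allocation, then pointer bookkeeping.
class FixedPool {
    struct Node { Node* next; };  // stored inside each *free* slot

    // A slot must be able to hold a Node and keep every slot suitably aligned.
    static std::size_t round_up(std::size_t n) {
        constexpr std::size_t align = alignof(std::max_align_t);
        if (n < sizeof(Node)) n = sizeof(Node);
        return (n + align - 1) / align * align;
    }

    std::size_t slot_size_;
    unsigned char* storage_;
    Node* free_head_ = nullptr;
    std::size_t in_use_ = 0;

public:
    FixedPool(std::size_t object_size, std::size_t slot_count)
        : slot_size_(round_up(object_size)),
          storage_(static_cast<unsigned char*>(::operator new(slot_size_ * slot_count))) {
        // Build the free list back to front so the head ends up at slot 0.
        for (std::size_t i = slot_count; i-- > 0;) {
            free_head_ = new (storage_ + i * slot_size_) Node{free_head_};
        }
    }
    FixedPool(const FixedPool&) = delete;
    FixedPool& operator=(const FixedPool&) = delete;
    ~FixedPool() { ::operator delete(storage_); }

    // Pops the first free slot; throws std::bad_alloc when the pool is exhausted.
    void* allocate() {
        if (free_head_ == nullptr) throw std::bad_alloc();
        Node* slot = free_head_;
        free_head_ = slot->next;
        ++in_use_;
        return slot;
    }

    // Pushes the slot back onto the front of the free list.
    void deallocate(void* p) noexcept {
        free_head_ = new (p) Node{free_head_};
        --in_use_;
    }

    std::size_t in_use() const { return in_use_; }
    std::size_t slot_size() const { return slot_size_; }
};
```

A caller would construct objects into the returned slot with placement new and destroy them before calling deallocate. On a fresh pool, consecutive allocations return adjacent slots. Once slots are freed out of order, the most recently freed slot is handed out first.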

The PMR Tangent Worth Taking

Carpenter mentions polymorphic memory resources (PMR) briefly but makes a point worth unpacking: PMR with a monotonic buffer can give you stack-based allocation speeds with heap flexibility. If you're using std::vector normally, it allocates on the heap. PMR lets you back that with stack memory for small allocations, falling back to the heap only when needed.
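As a rough illustration of that idea, the sketch below backs a std::pmr::vector with a std::pmr::monotonic_buffer_resource sitting on a stack array. The 1024-byte buffer size and the function name pmr_vector_uses_stack_buffer are arbitrary choices for this example, not something from the talk, and the code assumes a C++17 standard library with <memory_resource>.

```cpp
#include <array>
#include <cstddef>
#include <functional>
#include <memory_resource>
#include <vector>

// Returns true if the vector's elements ended up inside the stack buffer.
inline bool pmr_vector_uses_stack_buffer() {
    std::array<std::byte, 1024> buffer;  // lives on the stack

    // Hands out memory from `buffer` first. Once the buffer is used up, it
    // falls back to the upstream resource, which by default is
    // std::pmr::get_default_resource() (normally backed by new/delete).
    std::pmr::monotonic_buffer_resource arena(buffer.data(), buffer.size());

    std::pmr::vector<int> values(&arena);
    values.reserve(16);  // 16 ints fit comfortably in the buffer
    for (int i = 0; i < 16; ++i) values.push_back(i);

    // Check that the element storage lies within [begin, end) of the buffer.
    const auto* first = reinterpret_cast<const std::byte*>(values.data());
    const std::byte* begin = buffer.data();
    const std::byte* end = begin + buffer.size();
    std::less<const std::byte*> before;
    return !before(first, begin) && before(first, end);
}
```

A monotonic resource never reuses memory until it is destroyed or released, so it suits short-lived, bursty work such as building a container inside one function call.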

This is the kind of optimization that doesn't show up in benchmarks of toy programs but matters enormously in tight loops processing real data. He doesn't elaborate in the talk—it's marked for "more advanced topics"—but the mention signals where experienced developers actually spend optimization time.

What The Standard Library Can't Do

The tension in Carpenter's talk is between "depend on the standard library" and "know when it's not enough." He's refreshingly honest about not being a C++ memory expert, despite having served as technical editor for a book on C++ memory management. His expertise comes from shipping production code that processes millions of transactions.

The standard library gives you general-purpose solutions optimized for the average case. Custom allocators let you optimize for your specific case—fixed-size objects, predictable lifetimes, known access patterns. The question isn't whether the standard library is good (it is), but whether your problem has characteristics that make custom allocation worthwhile.

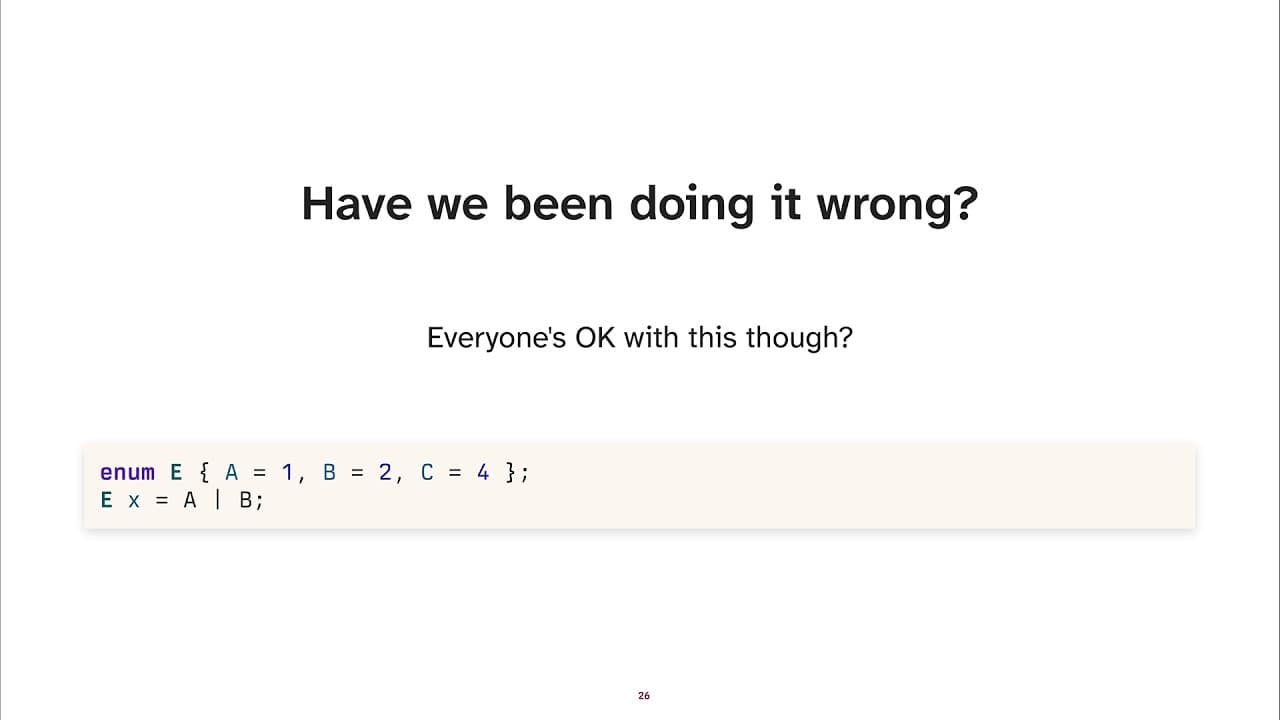

C++ gives you this control in ways Go's garbage collector, Rust's borrow checker, or Swift's automatic reference counting simply don't. "We can shoot our feet off, right?" Carpenter jokes. "But with great power comes great responsibility."

The code is on GitHub. The patterns are well-documented. The decision of whether to use them is yours, and that decision requires understanding what's actually happening when you allocate memory—not just trusting that modern C++ handles it.

— Mike Sullivan, Technology Correspondent

Watch the Original Video

Back to Basics: Custom Allocators Explained - From Basics to Advanced - Kevin Carpenter - CppCon

CppCon

59m 1s

About This Source

CppCon

CppCon is a YouTube channel serving as a vital educational hub for C++ programming enthusiasts and professionals. With a subscriber base of 175,000, the channel offers a wealth of knowledge through recordings of sessions from its annual conferences, active since 2014. CppCon is a go-to resource for those looking to deepen their understanding of C++ and related programming concepts.
